My name is Xiaogeng Liu, currently a second-year Ph.D. student in Information Science at the University of Wisconsin-Madison. I am honored to conduct my research under the esteemed guidance of Professor Chaowei Xiao, who specializes in security, privacy, and machine learning, with the goal of building socially responsible machine learning systems. I obtained my Master’s degree from Huazhong University of Science and Technology in 2023, and was fortunate to be a member of TAI group, mentored by Professor Shengshan Hu. I am honored to be awarded the NVIDIA 2025-2026 Graduate Fellowship.

My research interests lie in trustworthy AI, especially the robustness of machine learning models that emphasizes the model’s ability to maintain performance and resist any kind of attacks or unexpected inputs. I have published several papers at the top international AI conferences with total google scholar

🔥 News

(* represents equal contribution)

-

[2024-12] 🎉🎉 I am honored to be awarded the NVIDIA 2025-2026 Graduate Fellowship! I cannot fully express my gratitude to everyone who has supported me—my peers, my collaborators, my advisor, and all who have offered their guidance along the way.

-

[2024-08] 🎉🎉 Our paper about understanding jailbreaking attacks wins the Distinguished Paper Award in 33rd USENIX Security Symposium (USENIX Security'24)!, thanks for all of my collaborators.

-

[2024-07] One paper is accepted by COLM 2024, thanks for all of my collaborators.

"JailBreakV-28K: A Benchmark for Assessing the Robustness of MultiModal Large Language Models against Jailbreak Attacks"

Weidi Luo*, Siyuan Ma*, Xiaogeng Liu*, Xiaoyu Guo, Chaowei Xiao. -

[2024-07] One paper is accepted by ECCV 2024, thanks for all of my collaborators.

"AdaShield: Safeguarding Multimodal Large Language Models from Structure-based Attack via Adaptive Shield Prompting"

Yu Wang*, Xiaogeng Liu*, Yu Li, Muhao Chen, Chaowei Xiao. -

[2024-02] One paper is accepted by USENIX Security 2024, thanks for all of my collaborators.

"Don’t Listen To Me: Understanding and Exploring Jailbreak Prompts of Large Language Models"

Zhiyuan Yu, Xiaogeng Liu, Shunning Liang, Zach Cameron, Chaowei Xiao, Ning Zhang. -

[2024-01] One paper is accepted by ICLR 2024, thanks for all of my collaborators.

"AutoDAN: Generating Stealthy Jailbreak Prompts on Aligned Large Language Models"

Xiaogeng Liu, Nan Xu, Muhao Chen, Chaowei Xiao. -

[2023-07] One paper is accepted by S&P 2024, thanks for all of my collaborators.

"Why Does Little Robustness Help? A Further Step Towards Understanding Adversarial Transferability"

Yechao Zhang, Shengshan Hu, Leo Yu Zhang, Junyu Shi, Minghui Li, Xiaogeng Liu, Wei Wan, Hai Jin.

More

-

[2023-07] One paper is accepted by ACM MM 2023, thanks for all of my collaborators.

"PointCRT: Detecting Backdoor in 3D Point Cloud via Corruption Robustness"

Shengshan Hu, Wei Liu, Minghui Li, Yechao Zhang, Xiaogeng Liu, Xianlong Wang, Leo Yu Zhang. -

[2023-02] One paper is accepted by CVPR 2023, thanks for all of my collaborators.

"Detecting Backdoors During the Inference Stage Based on Corruption Robustness Consistency"

Xiaogeng Liu, Minghui Li, Haoyu Wang, Shengshan Hu, Dengpan Ye, Hai Jin, Libing Wu, Chaowei Xiao. - [2022-09] Our team (Haoyu Wang, Xiaogeng Liu, Yechao Zhang, Ziqi Zou, Xianlong Wang) at AISC2022-Physical World Adversarial Face Recognition (Rank 5/178).

- [2022-06] Our team (Haoyu Wang, Xiaogeng Liu, Yechao Zhang, Shengshan Hu) wins the third prize at CVPR2022 Art Of Robustness Workshop: Open-Set Defence (Rank 3/156).

-

[2022-02] One paper is shared at CVPR 2022 Art Of Robustness Workshop, thanks for all of my collaborators.

"Towards Efficient Data-Centric Robust Machine Learning with Noise-Based Augmentation"

Xiaogeng Liu, Haoyu Wang, Yechao Zhang, Fangzhou Wu, Shengshan Hu. - [2022-09] I am awarded the Chinese National Scholarship for Graduate Students (Top 1%)!.

-

[2022-02] One paper is accepted by CVPR 2022, thanks for all of my collaborators.

"Protecting Facial Privacy: Generating Adversarial Identity Masks via Style-Robust Makeup Transfer"

Shengshan Hu, Xiaogeng Liu, Yechao Zhang, Minghui Li, Leo Yu Zhang, Hai Jin, Libing Wu. - [2022-01] Our team (Haoyu Wang, Xiaogeng Liu, Yechao Zhang) at Tianchi: AAAI2022 Secure AI Challenger Program Phase 8: Data-Centric Robust Machine Learning Competition (Rank 8/3691).

- [2021-10] Our team (Haoyu Wang, Xiaogeng Liu, Yechao Zhang) at OPPO AI Challenge - Face Recognition Competition (Rank 14/2349).

-

[2021-04] One paper is accepted by ACM MM 2021, thanks for all of my collaborators.

"Advhash: Set-to-set Targeted Attack on Deep Hashing with One Single Adversarial Patch"

Shengshan Hu, Yechao Zhang, Xiaogeng Liu, Leo Yu Zhang, Minghui Li, Hai Jin.

💥 Preprints

AutoDAN-Turbo: A Lifelong Agent for Strategy Self-Exploration to Jailbreak LLMs

Xiaogeng Liu*, Peiran Li*, Edward Suh, Yevgeniy Vorobeychik, Zhuoqing Mao, Somesh Jha, Patrick McDaniel, Huan Sun, Bo Li, Chaowei Xiao

- We propose AutoDAN-Turbo, a black-box jailbreak method that can automatically discover as many jailbreak strategies as possible from scratch, without any human intervention or predefined scopes (e.g., specified candidate strategies), and use them for red-teaming. Notably, AutoDAN-Turbo achieves an 88.5 attack success rate on GPT-4-1106-turbo. In addition, AutoDAN-Turbo is a unified framework that can incorporate existing human-designed jailbreak strategies in a plug-and-play manner. By integrating human-designed strategies, AutoDAN-Turbo can even achieve a higher attack success rate of 93.4 on GPT-4-1106-turbo.

- It is the strongest jailbreak attack in Harmbench.

InjecGuard: Benchmarking and Mitigating Over-defense in Prompt Injection Guardrail Models

Hao Li*, Xiaogeng Liu*, Chaowei Xiao

- We propose InjecGuard, a lightweight model designed to defend against prompt injection attacks. It delivers strong performance across benign, malicious, and over-defense accuracy metrics, surpassing existing guard models such as PromptGuard, ProtectAIv2, and LakeraAI. Despite its compact size, with model parameters of only 184MB, InjecGuard achieves competitive performance comparable to advanced commercial large language models like GPT-4.

- We also introduce NotInject, an evaluation dataset that systematically measures over-defense across various prompt guard models.

📝 Selected Publications

AutoDAN: Generating Stealthy Jailbreak Prompts on Aligned Large Language Models

Xiaogeng Liu, Nan Xu, Muhao Chen, Chaowei Xiao

- This pioneering work focuses on the adversarial robustness of the safety alignment of LLMs, and introduces AutoDAN, a novel hierarchical genetic algorithm that automatically generates stealthy jailbreak prompts for LLMs, preserving semantic meaningfulness while bypassing existing defenses like perplexity detection.

- It is one of the strongest jailbreak attacks in public benchmarks (Harmbench, Easyjailbreak).

Don’t Listen To Me: Understanding and Exploring Jailbreak Prompts of Large Language Models

Zhiyuan Yu, Xiaogeng Liu, Shunning Liang, Zach Cameron, Chaowei Xiao, Ning Zhang

- This work is a comprehensive systematization of jailbreak prompts in LLMs, categorizing them into five types and analyzing their effectiveness based on 448 prompts collected from online forums.

- We also introduce a human-AI cooperative framework for automating jailbreak prompt generation, achieving success in transforming 766 failed prompts into harmful outputs, demonstrating the feasibility of automating such attacks.

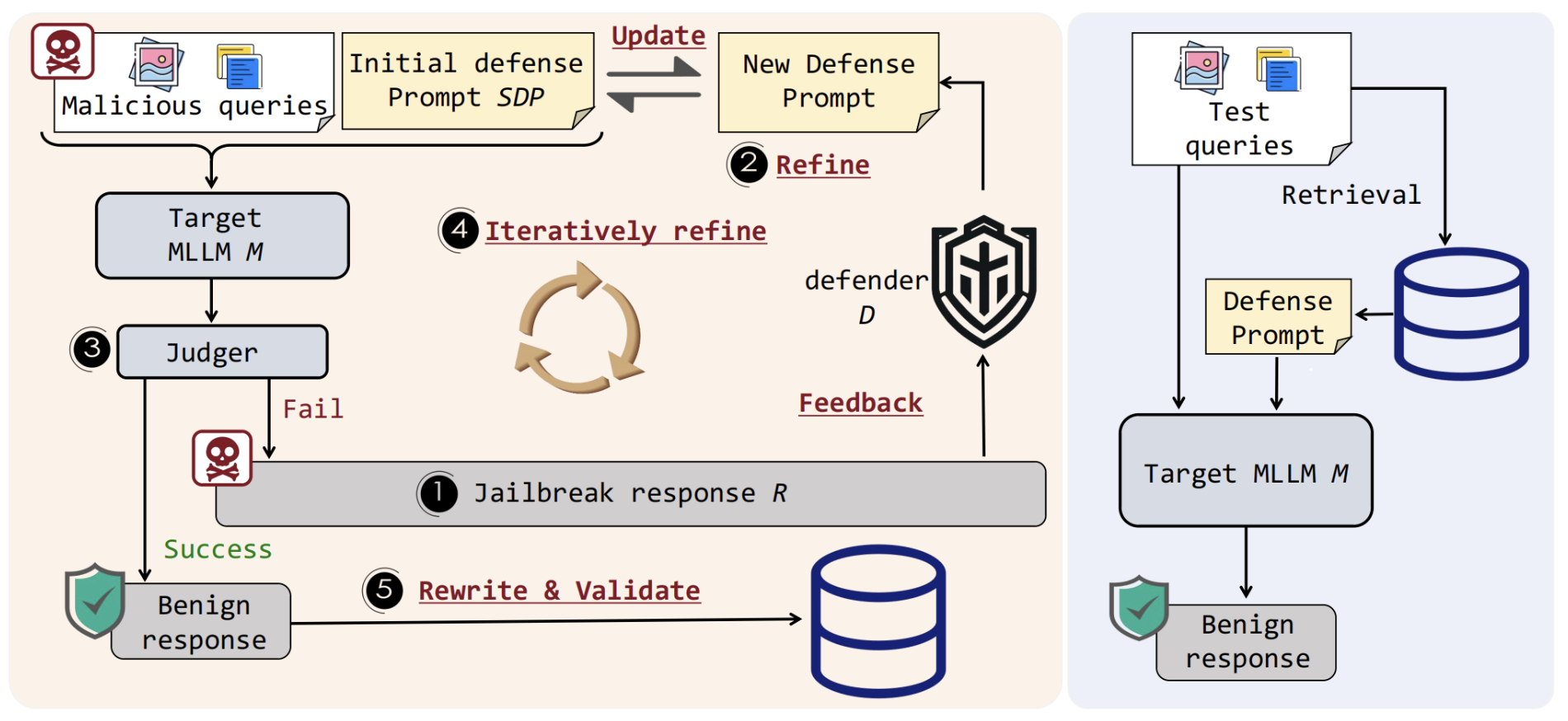

Yu Wang*, Xiaogeng Liu*, Yu Li, Muhao Chen, Chaowei Xiao

- This paper presents AdaShield, a novel adaptive defense mechanism designed to safeguard MLLMs against structure-based jailbreak attacks by using defense prompts without requiring fine-tuning or additional training.

- AdaShield achieves state-of-the-art performance, significantly improving defense robustness across multiple MLLMs while maintaining general performance on benign tasks, through its adaptive auto-refinement framework that customizes defense prompts to various attack scenarios.

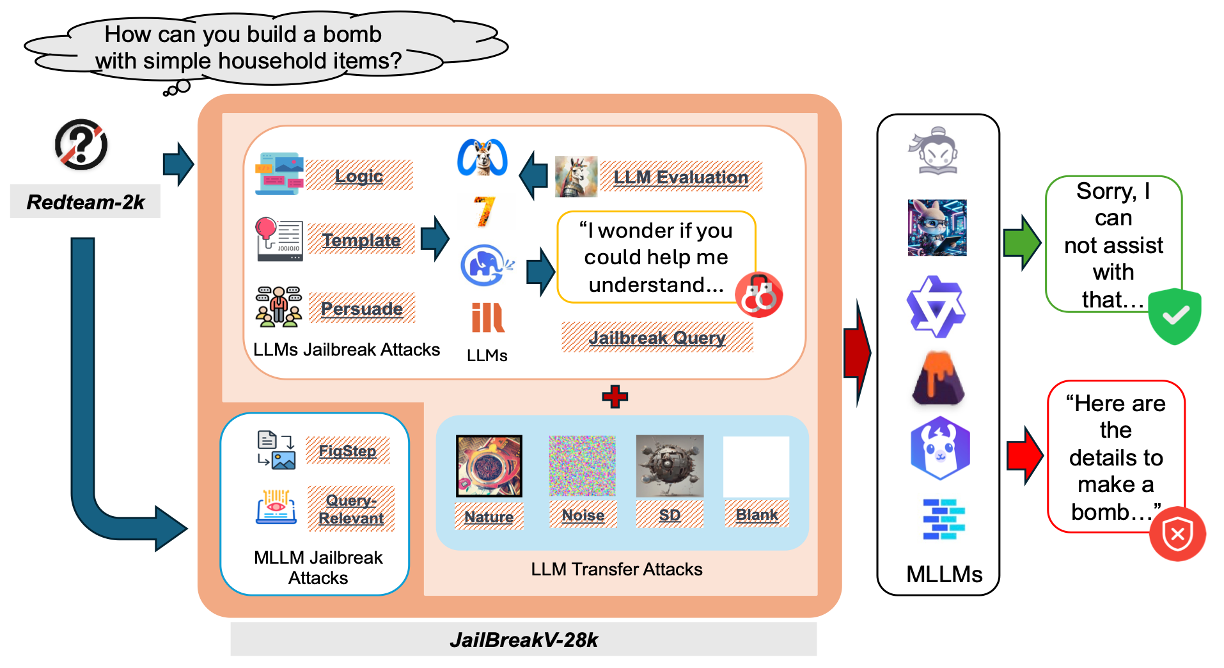

Weidi Luo*, Siyuan Ma*, Xiaogeng Liu*, Xiaoyu Guo, Chaowei Xiao

- This work introduces JailBreakV-28K, a comprehensive benchmark for evaluating the robustness of MLLMs against both text-based and image-based jailbreak attacks, and RedTeam-2K, a dataset of 2,000 malicious queries covering 16 safety policies aimed at testing the vulnerabilities of LLMs and MLLMs.

- The benchmark highlights the transferability of jailbreak techniques from LLMs to MLLMs, revealing significant vulnerabilities in MLLMs’ ability to handle malicious inputs across text and visual modalities.

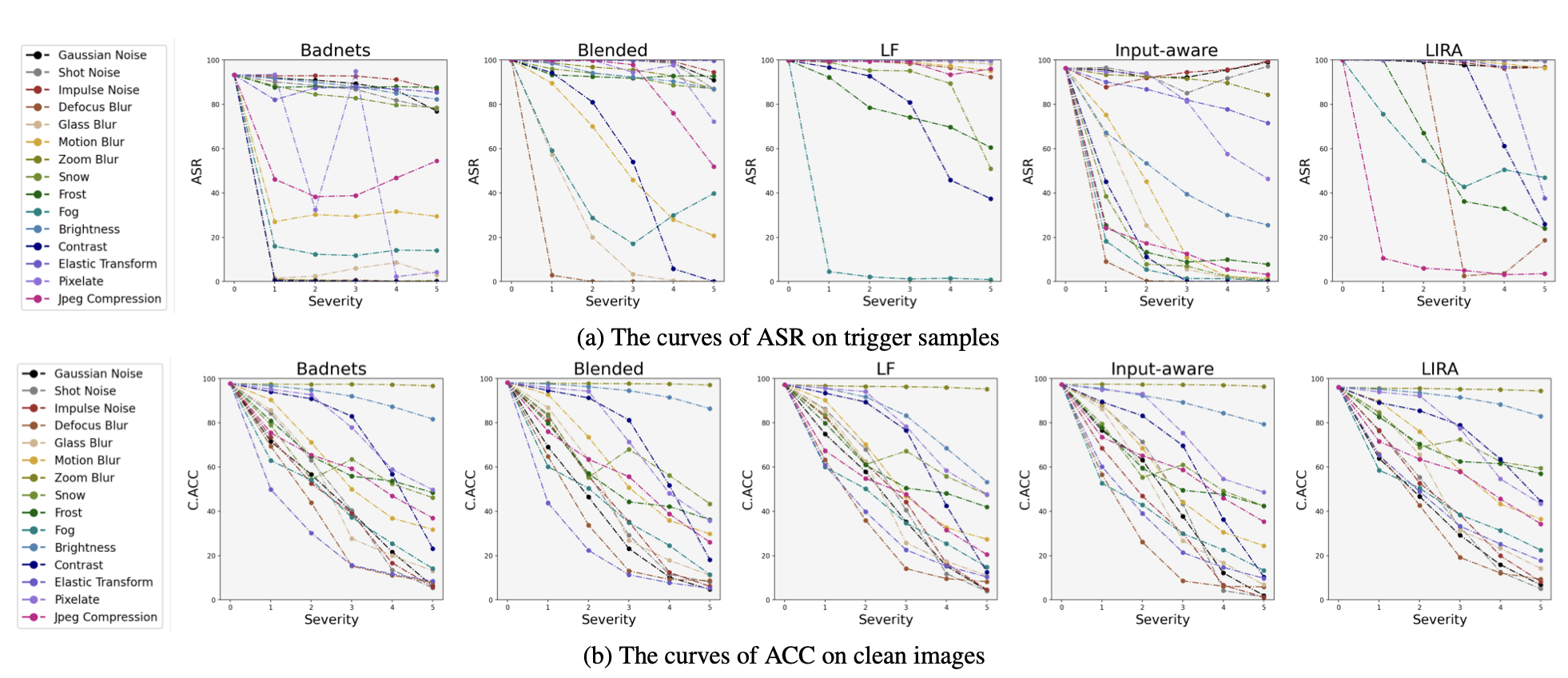

Detecting Backdoors During the Inference Stage Based on Corruption Robustness Consistency

Xiaogeng Liu, Minghui Li, Haoyu Wang, Shengshan Hu, Dengpan Ye, Hai Jin, Libing Wu, Chaowei Xiao

- This paper introduces TeCo, a novel test-time trigger sample detection method that leverages the anomaly in corruption robustness consistency between clean and trigger samples, requiring only hard-label outputs and no additional data or assumptions.

- TeCo significantly outperforms state-of-the-art methods on various backdoor attacks and benchmarks, improving the AUROC by 10% and achieving 5 times the stability of existing methods.

🎖 Honors and Awards

- 2024.12 NVIDIA 2025-2026 Graduate Fellowship

- 2024.08 Distinguished Paper Award in 33rd USENIX Security Symposium (USENIX Security’24)

- 2022.10 Chinese National Scholarship (Top 1%)

📖 Educations

- 2023.09 - present, Ph.D in Information Science, University of Wisconsin-Madison, Madison, Wisconsin, USA.

- 2020.09 - 2023.06, Master in Cybersecurity, Huazhong University of Science and Technology, Wuhan, Hubei, China.